Work Pools and Workers: Deploy Python Securely

Writing great Python code is one thing. Getting this Python code to run reliably, efficiently and securely in a production environment is another story. This process is known as “deploying your code” or just “deployment”. To deploy Python code well, you will need to consider the type of infrastructure your code will run on, as well as the level of control you will need over this infrastructure. This becomes increasingly important as your workloads scale.

Deploying Python at scale within a serious business operation means dealing with varying infrastructure and security requirements. You can’t simply deploy all your Python scripts without paying careful attention to the kind of infrastructure they are being run on, at least not without being wasteful or insecure. Your operations department might have very different infrastructure needs from your ML teams, for example, or you may need to define security policies and track infrastructure costs separately per team or user. Work Pools and workers give you the granular control and flexibility you need to run jobs efficiently and securely.

![]()

Deploy Python with Prefect

Prefect allows you to deploy Python code in a more secure, robust manner by simplifying the way you manage your infrastructure. Are you orchestrating jobs that run frequently with light infrastructure requirements? Then a simple long-running terminal process that executes your jobs might be enough. But what if you’re trying to run infrequent jobs that need heavy infrastructure and custom environment variables per run? In that case, you will need a way to quickly spin up the required hardware, configure the environment correctly per job and then spin it all down again after the job has completed.

There are many different ways to deploy your Python code with Prefect:

- using a simple, long-running terminal process on the same machine that executes your code,

- on external infrastructure managed by Prefect for ease of use,

- on external infrastructure that you manage yourself for maximum control and flexibility.

Each of these solutions is designed for particular situations and there is no one-size-fits-all. There are important trade-offs to consider between the complexity of your deployment and the performance, control and security you want to achieve. This article will walk you through each solution. At each step you will learn when and how to use each solution so you can confidently deploy your Python code in the right sweet spot between complexity and performance. You can also go straight to the code example.

Let’s jump in! 🤿

![]()

What is a Work Pool?

A Work Pool is a temporary store in which Python work to be executed is collected. This work is created as part of an orchestration flow, which is code logic that defines steps in a workflow. Work to be executed is pushed to the Work Pool by a Prefect Flow script and can then be fetched and executed by other processes, potentially running on other machines.

Work Pools give you the flexibility to provision and configure infrastructure dynamically by decoupling your orchestration flow from your infrastructure. Jobs can be triggered on one machine but executed on another. This gives you more control and flexibility over the type of infrastructure your deployed Python code is run on.

![]()

What’s the difference between a worker and a Work Pool?

A Work Pool is a channel in which orchestration work that needs to be executed is collected. A worker is a light-weight polling process that listens to the Work Pool to fetch new work to be executed. The worker itself is where work actually gets executed. In a pull Work Pool, the worker will spin up infrastructure for each flow run.
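To make the division of labour concrete, here is a toy model in plain Python. It is only a sketch and not how Prefect is implemented: the names ToyWorkPool, toy_worker and add are invented for this illustration, and an in-memory queue stands in for the Prefect API.

```python
import queue


class ToyWorkPool:
    """Illustrative stand-in for a Work Pool: a store of pending work."""

    def __init__(self):
        self._pending = queue.Queue()

    def submit(self, flow_fn, **parameters):
        """Called by the 'flow script' side to register work."""
        self._pending.put((flow_fn, parameters))

    def fetch(self):
        """Return the next pending item, or None if the pool is empty."""
        try:
            return self._pending.get_nowait()
        except queue.Empty:
            return None


def toy_worker(pool, max_polls=3):
    """Poll the pool a fixed number of times and run whatever is found."""
    results = []
    for _ in range(max_polls):
        item = pool.fetch()
        if item is None:
            continue  # nothing scheduled; a real worker would wait and poll again
        flow_fn, parameters = item
        results.append(flow_fn(**parameters))
    return results


def add(x, y):
    return x + y


pool = ToyWorkPool()
pool.submit(add, x=1, y=2)   # the side that triggers work
print(toy_worker(pool))      # the side that executes work
```

The point of the sketch is that the code submitting work never calls add itself. It only records what should run, and a separate polling loop decides when and where to run it.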

Types of Work Pools

Prefect provides three different types of Work Pools, each designed for particular use cases and situations. We list them here in increasing order of complexity and infrastructure control:

- Managed Work Pools

- Push Work Pools

- Pull Work Pools

Let’s look at each type of Work Pool in some more detail:

Managed Work Pools

Managed Work Pools are administered by Prefect and handle the submission and execution of code on your behalf. Prefect handles provisioning and managing your infrastructure for you. This approach is great when you want to think as little about deploying as possible - particularly when your flow needs to run infrequently on ephemeral infrastructure and you are happy for this infrastructure to be fully managed by Prefect.

📓 Ephemeral is just a technical term for ‘temporary’ and refers to infrastructure that is turned on when you need it and turned back off when the computations have completed.

Managed Work Pools can be a great option for running a clean-up job to reset or clear some heavy infrastructure that’s stuck in a restarting state. If you try to do this with your own infrastructure, then that infrastructure can go into an eternally restarting state and have the same issue. If you do it with a managed Work Pool, then Prefect will manage it for you – and can get you back on track.

Push Work Pools

Push Work Pools can submit flow runs directly to your own serverless infrastructure providers such as Google Cloud Run, Azure Container Instances, and AWS ECS. They are called “push” Work Pools because Prefect pushes work to your remote infrastructure.

This approach is great when your code needs to run infrequently on ephemeral infrastructure and you would rather run it on your own serverless infrastructure for cost, security or observability reasons. For example, you might want to consider using a push Work Pool for large ETL jobs that are only run once a day.

Worker-based Work Pools

Worker-based Work Pools rely on a worker to pick up flow runs. A worker is a light-weight polling process that listens to the Work Pool and pulls any work that needs to be executed. Because of this, they are also referred to as “pull Work Pools” – try to say that 5 times in a row ;)

This approach is great when your flow needs to run infrequently on ephemeral infrastructure and you need even more control of your infrastructure, for example to deploy in your own Docker containers or Kubernetes clusters or to spin up unique infrastructure per flow run. Consider using a worker-based Work Pool for jobs that require a lot of memory or compute power and need to scale accordingly. Pull Work Pools are also useful for background tasks that are triggered from an application and can happen as often as once a minute.

💡 What’s the difference between a push and a pull Work Pool? A push Work Pool submits flow runs directly to your own serverless infrastructure. Infrastructure is ephemeral but cannot be customized per run. A pull Work Pool uses a worker process to pull work from the pool to the infrastructure. The worker can spin up custom infrastructure for each run.

When should I use a Work Pool?

Let’s work through a code example to show Work Pools and workers in action. By working through this code, you will gain an understanding of when you should consider using a Work Pool, as well as what type of Work Pool or worker to use.

The diagram below summarizes when you should use which Work Pool. Feel free to take a look at it before diving into the code and then referring back to it whenever you need an overview.

![]()
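If you prefer code to diagrams, the guidance in this article can be approximated with a small helper function. This is only a sketch of the rules of thumb described here, not an official Prefect API: the function name and its three parameters are made up for illustration.

```python
def choose_deployment_option(needs_remote_infra, use_own_cloud_account, custom_infra_per_run):
    """Return the deployment approach suggested by this article's rules of thumb.

    All three parameters are hypothetical yes/no answers:
    - needs_remote_infra: should runs execute somewhere other than the triggering machine?
    - use_own_cloud_account: should runs execute on serverless infrastructure you own?
    - custom_infra_per_run: does each flow run need its own infrastructure setup?
    """
    if not needs_remote_infra:
        return "flow.serve()"
    if custom_infra_per_run:
        return "pull Work Pool with a worker"
    if use_own_cloud_account:
        return "push Work Pool"
    return "managed Work Pool"


# A large ETL job that runs once a day on your own serverless account
print(choose_deployment_option(True, True, False))
```

Real decisions usually involve more factors (cost, security policies, team ownership), so treat this as a starting point rather than a rule.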

📓 To get the most out of these examples, it’s helpful if you understand basic Prefect concepts like Flows and Tasks. If you are new to Prefect, you may want to give the quickstart a try first.

To fully understand the function of a Work Pool, we’ll start by looking at a workflow that does not use one at all.

Deploying without a Work Pool

Imagine you have a Python script with some code that you would like to run regularly on some predefined schedule. The simplest way to deploy your Python code with Prefect is using the .serve() method.

After defining your Prefect @flow object (which is just a decorator on a Python function), you can simply call flow.serve() in your main Python method to create a deployment.

For example, let’s take this simple flow which fetches some statistics about a GitHub repository.

import httpx
from prefect import flow


@flow(log_prints=True)
def get_repo_info(repo_name: str = "PrefectHQ/prefect"):
    url = f"https://api.github.com/repos/{repo_name}"
    response = httpx.get(url)
    response.raise_for_status()
    repo = response.json()
    print(f"{repo_name} repository statistics 🤓:")
    print(f"Stars 🌠 : {repo['stargazers_count']}")
    print(f"Forks 🍴 : {repo['forks_count']}")

By adding a call to flow.serve() at the end of the script, you can turn this into a deployed workflow so that you can automate the runs:

if __name__ == "__main__":

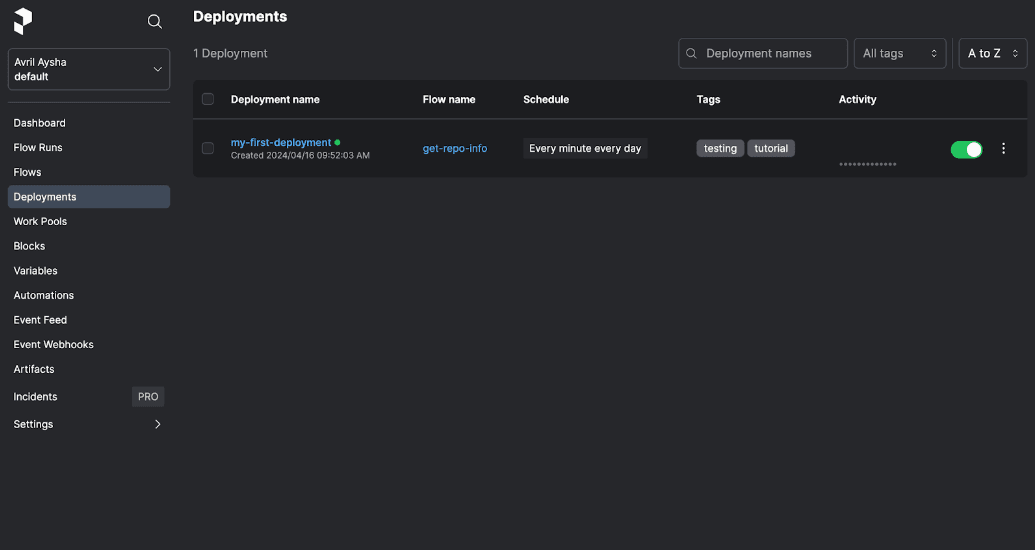

get_repo_info.serve(name="my-first-deployment")flow.serve() takes a number of arguments to further control your deployment, for example adding a cron schedule as well as tags and description metadata:

if __name__ == "__main__":
    get_repo_info.serve(
        name="my-first-deployment",
        cron="* * * * *",
        tags=["testing", "tutorial"],
        description="Given a GitHub repository, logs repository statistics for that repo.",
        version="tutorial/deployments",
    )

Run the Flow using:

python repo_info.py

Running this script will:

- create a deployment called "my-first-deployment" in the Prefect API, scheduled to run every minute and with the tags “testing” and “tutorial”

- stay running to listen for flow runs for this deployment; when a run is found, it will be asynchronously executed within a subprocess
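If cron syntax is new to you: the five space-separated fields stand for minute, hour, day of month, month and day of week, and `*` means "every". The small helper below (a hypothetical function, not part of Prefect) simply labels each field so a schedule is easier to read.

```python
CRON_FIELDS = ("minute", "hour", "day_of_month", "month", "day_of_week")


def describe_cron(expression):
    """Label the five fields of a standard cron expression."""
    parts = expression.split()
    if len(parts) != len(CRON_FIELDS):
        raise ValueError(f"expected 5 fields, got {len(parts)}: {expression!r}")
    return dict(zip(CRON_FIELDS, parts))


# Every field is a wildcard, so this schedule fires every minute
print(describe_cron("* * * * *"))

# Minute 0 of hour 1, every day: runs once a day at 01:00
print(describe_cron("0 1 * * *"))
```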

💡 Using flow.serve() will deploy your code on the same machine that triggers the orchestration job. Because you do not have a Work Pool, work will be triggered and executed on the same machine, as we saw at the start of the article in Diagram 1.

Run flows remotely with a managed Work Pool

But what if your flow requires some heavy computation and you would like to have it run on some more advanced (and expensive!) infrastructure? You could run the Prefect script with flow.serve() directly on the remote infrastructure but that would probably be wasteful, especially if the flow runs less frequently. Your expensive infrastructure would be costing money but sitting idle most of the time.

Prefect managed Work Pools allow you to run flows on dynamically provisioned, ephemeral hardware. This means you can run your Prefect script on your local machine and run the actual computations of your flow on remote infrastructure.

With a managed Work Pool, your Prefect script will add work that needs to be executed to a Work Pool. Prefect then spins up the necessary resources for you and will execute the runs there. You do not need to worry about any infrastructure setup, maintenance, or shutting down.

To run the same get_repo_info flow using a Prefect managed Work Pool, change flow.serve() to flow.deploy() and supply the required arguments:

if __name__ == "__main__":
    get_repo_info.from_source(
        source="https://github.com/discdiver/demos.git",
        entrypoint="repo_info.py:get_repo_info"
    ).deploy(
        name="my-first-deployment",
        work_pool_name="my-managed-pool",
    )

In the from_source method, we specify the source of our flow code. In the deploy method, we specify the name of our deployment and the name of the Work Pool that will run it (created in the next step).

Before running this script, you will need to create the my-managed-pool Work Pool. You can do this from the Work Pools tab in Prefect Cloud or by running the following command in your terminal:

prefect work-pool create my-managed-pool --type prefect:managed

This creates a Work Pool called my-managed-pool of type prefect:managed.

You can now run the script again to register your deployment on Prefect Cloud:

python repo_info.py

You should see a message in the CLI that your deployment was created with instructions for how to run it:

Successfully created/updated all deployments!

Deployments
┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━┳━━━━━━━━━┓
┃ Name                              ┃ Status  ┃ Details ┃
┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━╇━━━━━━━━━┩
│ get-repo-info/my-first-deployment │ applied │         │
└───────────────────────────────────┴─────────┴─────────┘

To schedule a run for this deployment, use the following command:

$ prefect deployment run 'get-repo-info/my-first-deployment'

You can also run your flow via the Prefect UI: https://app.prefect.cloud/account/abc/workspace/123/deployments/deployment/xyz

💡 Managed Work Pools give you ephemeral infrastructure to deploy code on without any of the headache of provisioning or maintaining it. Use managed Work Pools when you need to run flows remotely (not on the machine that triggers the flow) and you don’t want to have a long-running process like flow.serve() sitting idle. In Prefect Cloud, you have a limited number of compute hours with managed Work Pools, which makes them a good fit for cleanup jobs or small processes.
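A note on the 'get-repo-info/my-first-deployment' identifier in the output above: it combines the flow name with the deployment name. When a flow is not given an explicit name, Prefect derives it from the function name with underscores replaced by hyphens, which is why get_repo_info shows up as get-repo-info. The helper below is a hypothetical illustration of that naming convention, not a Prefect function.

```python
def deployment_identifier(flow_function_name, deployment_name):
    """Build the 'flow-name/deployment-name' string used by `prefect deployment run`.

    Assumes the flow was declared without an explicit name, so the flow name
    is the function name with underscores replaced by hyphens.
    """
    flow_name = flow_function_name.replace("_", "-")
    return f"{flow_name}/{deployment_name}"


print(deployment_identifier("get_repo_info", "my-first-deployment"))
```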

More control with push Work Pools

Many users will find that they need more control over the infrastructure that their flows run on. Serverless push Work Pools can be a great choice in this case: they scale with demand and provide more configuration options than Prefect managed Work Pools. Your push Work Pool will store information about what type of infrastructure your flow will run on, what default values to provide to compute jobs, and other important execution environment parameters, such as the Python packages to install.

Setting up the cloud provider pieces for infrastructure can be tricky and time consuming. Fortunately, Prefect can automatically provision infrastructure for you in your cloud provider of choice and wire it all together to work with your push Work Pool.

To use a push Work Pool, you will need an account with sufficient permissions on the cloud provider that you want to use. We'll use GCP for this example. Docker is also required to build and push images to your registry. Make sure to install the gcloud CLI, authenticate with your GCP project and activate the Cloud Run API.

Run the following command to set up a Work Pool named my-cloud-run-pool of type cloud-run:push:

prefect work-pool create --type cloud-run:push --provision-infra my-cloud-run-pool

You should see something like this in your terminal, indicating that the infrastructure is being provisioned:

![]()

To run your get_repo_info Flow with your GCP push Work Pool, update your call to deploy() with the following information:

from prefect.deployments import DeploymentImage

if __name__ == "__main__":
    get_repo_info.from_source(
        source="https://github.com/discdiver/demos.git",
        entrypoint="repo_info.py:get_repo_info"
    ).deploy(
        name="my-deployment",
        work_pool_name="my-cloud-run-pool",
        cron="0 1 * * *",
        image=DeploymentImage(
            name="my-image:latest",
            platform="linux/amd64",
        )
    )

Run the script to create the deployment on the Prefect Cloud server. Note that this script will build a Docker image with the tag <region>-docker.pkg.dev/<project>/<repository-name>/my-image:latest and push it to your repository.
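To see how the pieces of that image tag fit together, here is a tiny helper that assembles a name of the same shape. The function name and the example values (us-central1, my-project, prefect-images) are placeholders invented for this sketch. Your real region, project and repository come from your own GCP setup.

```python
def artifact_registry_image(region, project, repository, image_name, tag="latest"):
    """Assemble an image reference in the <region>-docker.pkg.dev/... layout."""
    return f"{region}-docker.pkg.dev/{project}/{repository}/{image_name}:{tag}"


# Placeholder values, for illustration only
print(artifact_registry_image("us-central1", "my-project", "prefect-images", "my-image"))
```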

See the Push Work Pool guide for more details and example commands for each cloud provider.

Fully custom infrastructure with pull Work Pools and workers

Managed Work Pools and push Work Pools are limited by the fact that they use the same infrastructure for all your flow runs. If you need even more fine-grained control of your infrastructure, you may want to consider using a pull Work Pool with a client-side worker. Pull Work Pools with workers allow you to run each flow on its own, separately-defined infrastructure, including in your own Docker containers or Kubernetes clusters.

![]()

In your terminal, run the following command to set up a Docker type Work Pool:

prefect work-pool create --type docker my-docker-pool

Navigate to the Work Pools tab and click into my-docker-pool. You should see a red status icon signifying that this Work Pool is not ready.

![]()

To make the Work Pool ready, you need to start a worker:

prefect worker start --pool my-docker-pool

You should see the worker start.

Worker 'DockerWorker 953c966e-38e5-45ac-8116-d50300a5b1e8' started!

It's now polling the Prefect API to check for any scheduled flow runs it should pick up and then submit for execution. You’ll see your new worker listed in the UI under the Workers tab of the Work Pools page and you should also be able to see a Ready status indicator on your Work Pool - progress!

![]()

You will need to keep this terminal session active for the worker to continue to pick up jobs. Since you are running this worker locally, the worker will terminate if you close the terminal. Therefore, in a production setting this worker should run as a daemonized or managed process.
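What “managed process” means depends on your platform. Common choices are systemd, a Docker restart policy or a Kubernetes Deployment. Purely to illustrate the idea, here is a minimal Python sketch of a supervisor loop that restarts a command when it exits with an error. The function name run_with_restarts and its parameters are assumptions made for this example, and a stand-in command is used so the sketch runs without Prefect installed.

```python
import subprocess
import sys
import time


def run_with_restarts(command, max_restarts=3, delay_seconds=1.0):
    """Run a command and restart it if it exits with a non-zero code.

    Returns a tuple of (last exit code, number of restarts performed).
    """
    restarts = 0
    while True:
        exit_code = subprocess.run(command).returncode
        if exit_code == 0 or restarts >= max_restarts:
            return exit_code, restarts
        restarts += 1
        time.sleep(delay_seconds)  # brief pause before starting the process again


# Stand-in for a crashing process. For a real worker the command would be
# something like: ["prefect", "worker", "start", "--pool", "my-docker-pool"]
failing_command = [sys.executable, "-c", "import sys; sys.exit(1)"]
print(run_with_restarts(failing_command, max_restarts=2, delay_seconds=0))
```

A dedicated process manager is usually the better choice in production, since it also handles logging, boot-time startup and resource limits.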

Now you’re all set to move your workflow to your pull Work Pool. To do so, update your get_repo_info script:

if __name__ == "__main__":
    get_repo_info.deploy(
        name="my-first-deployment",
        work_pool_name="my-docker-pool",
        image="my-first-deployment-image:tutorial",
        push=False
    )

Now you can run the flow script:

python repo_info.py

Prefect will build a custom Docker image containing your workflow code that the worker can use to dynamically spawn Docker containers whenever this workflow needs to run.

Go ahead and launch a flow run!

prefect deployment run 'get-repo-info/my-first-deployment'

Workers use a base job template to define the configuration passed to the worker for each flow run and the options available to deployment creators to customize worker behavior per deployment. You can configure the base job template when you create the Work Pool in the Prefect Cloud UI:

![]()

If you need to override the base job template for a specific deployment, you can further tweak worker configuration using the job_variables keyword. For example, here's how you can quickly set the image_pull_policy to be Never for this tutorial deployment without affecting the default value set on your Work Pool:

if __name__ == "__main__":
    get_repo_info.deploy(
        name="my-first-deployment",
        work_pool_name="my-docker-pool",
        job_variables={"image_pull_policy": "Never"},
        image="my-first-deployment-image:tutorial",
        push=False
    )

Great job! 🙌
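One way to picture how job_variables relate to the Work Pool defaults is as a dictionary merge, where values set on the deployment win over the pool's defaults and the pool itself stays unchanged. The sketch below is a simplified mental model, not Prefect's actual templating logic. The function name and the default values shown are hypothetical.

```python
def effective_job_variables(pool_defaults, deployment_overrides):
    """Return the pool defaults with deployment-level overrides applied on top."""
    merged = dict(pool_defaults)         # copy, so the pool defaults are not modified
    merged.update(deployment_overrides)  # deployment values take precedence
    return merged


# Hypothetical defaults configured on the Work Pool
pool_defaults = {"image_pull_policy": "IfNotPresent", "auto_remove": False}

print(effective_job_variables(pool_defaults, {"image_pull_policy": "Never"}))
print(pool_defaults)  # still holds the original defaults
```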

Use Work Pools and Workers for Granular Control over your Infrastructure

This tutorial has given you hands-on experience with different approaches to deploying your orchestration code. These solutions range from simple terminal processes to managed Work Pools and custom infrastructure setups. Each of these deployment solutions is designed for specific scenarios, balancing complexity with performance and control. Now that you understand the various available solutions and their limitations, you can use Prefect to confidently build deployments that strike the right balance between complexity and efficiency to drive success in your business operations.

Prefect makes complex workflows simpler, not harder. Try Prefect Cloud for free for yourself, download our open source package, join our Slack community, or talk to one of our engineers to learn more.